This post walks through every step required to set up dynamic volume provisioning with NFS. We will use a fresh Kubernetes cluster with no storage class provisioned. Unlike cloud environments, a home environment has no storage provisioner available by default. You can have one, but you need to configure it manually.

What is static storage provisioning?

Without dynamic volume provisioning, cluster admins have to create a pool of volumes in advance. Whenever a user creates a pod that requests a volume, the pod consumes one of the volumes the cluster admin created earlier. This way of working has clear disadvantages: every volume is created by hand, and the admin has to guess in advance how many volumes of which size will be needed.
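To make the manual effort concrete, here is a minimal sketch of what an admin would write for each volume under static provisioning. The PV name `static-nfs-pv-01` and the subdirectory `vol01` are hypothetical, chosen only for illustration; the server address and export path are the ones used later in this post. The subdirectory would also have to be created on the NFS server by hand.

```shell
# Sketch: one hand-written PersistentVolume for static provisioning.
# "static-nfs-pv-01" and "vol01" are hypothetical names, not from the post.
cat > static-pv.yaml <<'EOF'
apiVersion: v1
kind: PersistentVolume
metadata:
  name: static-nfs-pv-01
spec:
  capacity:
    storage: 2Gi
  accessModes:
    - ReadWriteOnce
  persistentVolumeReclaimPolicy: Retain
  nfs:
    server: 192.168.1.66
    path: /nfs_exported/vol01
EOF

# On a machine with cluster access, the admin would then run:
#   kubectl apply -f static-pv.yaml
```

Every additional volume means another manifest like this one, which is exactly the repetition that dynamic provisioning removes.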

What is dynamic storage provisioning?

Unlike static volume provisioning, when a user requests a volume for a pod, the volume is created automatically on demand. Dynamic provisioning eliminates the manual volume creation and the guesswork, and it uses storage efficiently. Dynamic volume provisioning (the magic) works in Kubernetes through volume plugins. At the time of writing, Kubernetes supports the following plugins. As the names suggest, a few are for cloud environments, a few are for on-prem, and some are for both. Not every plugin ships with its own dynamic provisioner; NFS does not, which is why this post installs an external provisioner for it. In a home environment, the easiest to implement are hostPath, local, and NFS. We will be talking about NFS in this post.

awsElasticBlockStore - AWS Elastic Block Store (EBS)

azureDisk - Azure Disk

azureFile - Azure File

cephfs - CephFS volume

csi - Container Storage Interface (CSI)

fc - Fibre Channel (FC) storage

gcePersistentDisk - GCE Persistent Disk

glusterfs - Glusterfs volume

hostPath - HostPath volume (for single node testing only; WILL NOT WORK in a multi-node cluster; consider using local volume instead)

iscsi - iSCSI (SCSI over IP) storage

local - local storage devices mounted on nodes.

nfs - Network File System (NFS) storage

portworxVolume - Portworx volume

rbd - Rados Block Device (RBD) volume

vsphereVolume - vSphere VMDK volume

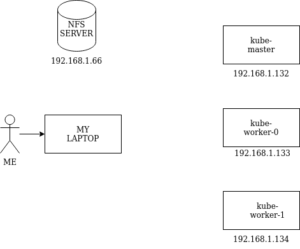

I have a 3-node Kubernetes cluster running in my lab. The following are the nodes, their roles, and their IP addresses.

On the NFS-Server node:

Create a directory where all the NFS-clients will be storing their data.

sudo mkdir -p "/nfs_exported"

export export_dir="/nfs_exported"

Now install and start NFS-server on the NFS-server node:

sudo apt update

sudo apt install nfs-kernel-server

sudo systemctl start nfs-kernel-server.service

sudo exportfs -ar

Create an Array of NFS-clients

This is a list of nodes I want to have access to the NFS server.

IPS=(192.168.1.132 192.168.1.133 192.168.1.134)

# Alternatively, here is a hack to populate the $IPS array automatically instead of typing it by hand (run it where kubectl is configured)

IFS=$' ' read -r -d '' -a IPS < <( kubectl get nodes -o jsonpath='{.items[*].status.addresses[?(@.type=="InternalIP")].address}' && printf '\0' )

Add the client details in /etc/exports, then re-export so the new entries take effect:

for node in "${IPS[@]}"; do

  echo "${export_dir} ${node}(rw,no_subtree_check,no_root_squash)" | sudo tee -a /etc/exports

done

sudo exportfs -ar
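Appending to the exports file in a loop adds duplicate entries if the loop is run a second time. A minimal sketch of an idempotent variant follows; the function name `add_exports` is hypothetical, and the demo writes to a scratch file rather than the real /etc/exports so it can be tried safely.

```shell
# add_exports FILE DIR IP...
# Appends one export line per client IP to FILE, skipping lines that are
# already present, so the function can be rerun without creating duplicates.
add_exports() {
    exports_file=$1
    dir=$2
    shift 2
    for node in "$@"; do
        line="${dir} ${node}(rw,no_subtree_check,no_root_squash)"
        # -x: match the whole line, -F: treat the pattern as a fixed string
        if ! grep -qxF "$line" "$exports_file" 2>/dev/null; then
            printf '%s\n' "$line" >> "$exports_file"
        fi
    done
}

# Demo against a scratch file instead of /etc/exports.
scratch=$(mktemp)
add_exports "$scratch" /nfs_exported 192.168.1.132 192.168.1.133 192.168.1.134
add_exports "$scratch" /nfs_exported 192.168.1.132   # rerun: adds nothing
cat "$scratch"
```

On the real server this would be called from a root shell with /etc/exports as the first argument, followed by `exportfs -ar` to apply the change.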

On the NFS-client nodes:

Install the NFS client on all the NFS-client nodes:

sudo apt install nfs-common

From any node having kubectl/helm access to Kubernetes:

Install helm if not already present:

curl -fsSL -o get_helm.sh https://raw.githubusercontent.com/helm/helm/main/scripts/get-helm-3

chmod 700 get_helm.sh

./get_helm.sh

Add the nfs-subdir-external-provisioner helm chart repository:

helm repo add nfs-subdir-external-provisioner https://kubernetes-sigs.github.io/nfs-subdir-external-provisioner/

"nfs-subdir-external-provisioner" has been added to your repositories

Installing the nfs-subdir-external-provisioner chart:

helm install nfs-subdir-external-provisioner nfs-subdir-external-provisioner/nfs-subdir-external-provisioner \

--set nfs.server=192.168.1.66 \

--set nfs.path=/nfs_exported \

--create-namespace \

--namespace nfs-prov

NAME: nfs-subdir-external-provisioner

LAST DEPLOYED: Tue Feb 15 14:12:14 2022

NAMESPACE: nfs-prov

STATUS: deployed

REVISION: 1

TEST SUITE: None
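The same settings can also be kept in a values file instead of `--set` flags, which is easier to review and reuse. This is a sketch under assumptions: the file name `nfs-values.yaml` is hypothetical, the `storageClass.*` keys are taken from my reading of the chart's documented values and are worth confirming with `helm show values`, and marking the class as default is my choice for illustration, not something the install above does.

```shell
# Sketch: values file mirroring the --set flags used above.
# storageClass.* keys are assumptions to verify against the chart's values.
cat > nfs-values.yaml <<'EOF'
nfs:
  server: 192.168.1.66
  path: /nfs_exported
storageClass:
  name: nfs-client
  defaultClass: true      # assumption: make nfs-client the default class
  archiveOnDelete: true   # keep data as archived-* when a claim is deleted
EOF

# The install would then become:
#   helm install nfs-subdir-external-provisioner \
#     nfs-subdir-external-provisioner/nfs-subdir-external-provisioner \
#     --values nfs-values.yaml --create-namespace --namespace nfs-prov
```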

Verify the installation status:

helm list -n nfs-prov

NAME NAMESPACE REVISION UPDATED STATUS CHART APP VERSION

nfs-subdir-external-provisioner nfs-prov 1 2022-02-15 14:12:14.804699104 -0600 CST deployed nfs-subdir-external-provisioner-4.0.16 4.0.2

kubectl get pod -n nfs-prov

NAME READY STATUS RESTARTS AGE

nfs-subdir-external-provisioner-58d8d9bd6c-gv4w4 1/1 Running 0 65s

kubectl get sc

NAME PROVISIONER RECLAIMPOLICY VOLUMEBINDINGMODE ALLOWVOLUMEEXPANSION AGE

nfs-client cluster.local/nfs-subdir-external-provisioner Delete Immediate true 23m

Once the claim and pod from the test below exist, you can verify that the provisioned directory is present on the NFS server and mounted in the pod. Run the following command on the NFS server. Notice the prefix “archived” on one of the directory names: when a claim is deleted, the provisioner renames its directory on the NFS server with the prefix “archived” instead of removing it, so the data is preserved.

ls -lrt /nfs_exported/

total 8

drwxrwxrwx 2 root root 4096 Mar 30 11:15 archived-default-myclaim-pvc-8749df7f-037e-4987-9a24-6a6ab3b3ae40

drwxrwxrwx 2 root root 4096 Mar 30 11:30 default-myclaim-pvc-618e1b0b-d894-4cb5-b9d6-d3b091f2c713
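The directory names in the listing above appear to be built from the namespace, the claim name, and the PV name, joined with hyphens. The sketch below reproduces that pattern so you can predict where a claim's data lands on the server; the helper names `pv_dir` and `archived_dir` are hypothetical, and the pattern is inferred from the listing rather than guaranteed for every chart configuration.

```shell
# pv_dir NAMESPACE PVC_NAME PV_NAME
# Prints the directory name the provisioner appears to use for a volume.
pv_dir() {
    printf '%s-%s-%s\n' "$1" "$2" "$3"
}

# archived_dir NAMESPACE PVC_NAME PV_NAME
# Prints the name the directory appears to get after it is archived.
archived_dir() {
    printf 'archived-%s\n' "$(pv_dir "$1" "$2" "$3")"
}

pv_dir default myclaim pvc-618e1b0b-d894-4cb5-b9d6-d3b091f2c713
archived_dir default myclaim pvc-8749df7f-037e-4987-9a24-6a6ab3b3ae40
```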

You can now run another (optional) test to check that content written in the client (pod) shows up on the NFS server. Here we write some text to the shared mount point from within the container.

kubectl exec -it mypod -- bash -c 'echo "this is written inside pod" > /var/www/html/foo'

Now verify that the content is synced to the NFS server by reading the file there.

cat /nfs_exported/default-myclaim-pvc-618e1b0b-d894-4cb5-b9d6-d3b091f2c713/foo

this is written inside pod

Time to test the changes:

Let’s create a persistent volume claim to consume it in a pod.

cat <<EOF | kubectl apply -f -

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

  name: myclaim

spec:

  storageClassName: nfs-client

  accessModes:

    - ReadWriteOnce

  resources:

    requests:

      storage: 2Gi

EOF

Now create a Pod using the above volume claim:

cat << EOF | kubectl create -f -

apiVersion: v1

kind: Pod

metadata:

  name: mypod

spec:

  containers:

    - name: myfrontend

      image: nginx

      volumeMounts:

        - mountPath: "/var/www/html"

          name: mypd

  volumes:

    - name: mypd

      persistentVolumeClaim:

        claimName: myclaim

EOF

Verify that the Pod is in Running state:

kubectl get pod

NAME READY STATUS RESTARTS AGE

mypod 1/1 Running 0 136m

Check PV and PVC status:

Notice that we never created the PV ourselves; it was created automatically. (MAGIC)

kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

myclaim Bound pvc-bb6af28a-9f18-4b8e-be4c-9ec4d5f08f84 2Gi RWO nfs-client 136m

kubectl get pv

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

pvc-bb6af28a-9f18-4b8e-be4c-9ec4d5f08f84 2Gi RWO Delete Bound default/myclaim nfs-client 137m
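If you want to check the claim from a script rather than by eye, one option is to pull the STATUS column out of the table output. The sketch below does that with awk; the function name `pvc_phase` is hypothetical, and the sample text is the output shown above, so the example runs without a cluster.

```shell
# pvc_phase NAME
# Reads `kubectl get pvc` table output on stdin and prints the STATUS
# column for the claim called NAME.
pvc_phase() {
    awk -v name="$1" 'NR > 1 && $1 == name { print $2 }'
}

# Sample output copied from this post.
sample='NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE
myclaim Bound pvc-bb6af28a-9f18-4b8e-be4c-9ec4d5f08f84 2Gi RWO nfs-client 136m'

printf '%s\n' "$sample" | pvc_phase myclaim
```

Against a live cluster this would be `kubectl get pvc | pvc_phase myclaim`. Asking kubectl for the field directly with `kubectl get pvc myclaim -o jsonpath='{.status.phase}'` avoids parsing the table at all.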

Now exec into the pod and create a test file within the pod’s mount path (/var/www/html):

ps@controller:~$ kubectl exec -it mypod -- bash

root@mypod:/# cd /var/www/html/

root@mypod:/var/www/html#

root@mypod:/var/www/html# echo "I am writing this from within the pod" > myfile

root@mypod:/var/www/html# cat myfile

I am writing this from within the pod

root@mypod:/var/www/html#

Log in to your NFS server (192.168.1.66):

Go back to the exported path, the one you exported by adding entries to the /etc/exports file on the NFS-server node. You can see that the file created inside the pod is present on the NFS server. You may make this available to other pods.

ps@nfsserver:/nfs_exported$ ls -lrt

total 4

drwxrwxrwx 2 root root 4096 Feb 15 14:41 default-myclaim-pvc-bb6af28a-9f18-4b8e-be4c-9ec4d5f08f84

ps@nfsserver:/nfs_exported$

ps@nfsserver:/nfs_exported$ cd default-myclaim-pvc-bb6af28a-9f18-4b8e-be4c-9ec4d5f08f84

ps@nfsserver:/nfs_exported/default-myclaim-pvc-bb6af28a-9f18-4b8e-be4c-9ec4d5f08f84$ ls -lrt

total 4

-rw-r--r-- 1 root root 38 Feb 15 16:58 myfile

ps@nfsserver:/nfs_exported/default-myclaim-pvc-bb6af28a-9f18-4b8e-be4c-9ec4d5f08f84$ cat myfile

I am writing this from within the pod

ps@nfsserver:/nfs_exported/default-myclaim-pvc-bb6af28a-9f18-4b8e-be4c-9ec4d5f08f84$
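If several pods, possibly on different nodes, should share the same data, a claim with the ReadWriteMany access mode is the usual fit for NFS. Here is a minimal sketch; the claim name `shared-claim` and the file name are hypothetical, and the storage class is the `nfs-client` class created earlier.

```shell
# Sketch: a claim intended to be mounted by several pods at once.
# "shared-claim" is a hypothetical name, not from the post.
cat > shared-claim.yaml <<'EOF'
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: shared-claim
spec:
  storageClassName: nfs-client
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 2Gi
EOF

# Apply it from a machine with cluster access:
#   kubectl apply -f shared-claim.yaml
```

Each pod that should see the data then references `claimName: shared-claim` in its volumes section, the same way `mypod` references `myclaim`.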

References:

- https://github.com/kubernetes-sigs/nfs-subdir-external-provisioner

- https://ubuntu.com/server/docs/service-nfs